In Elon Musk’s 2018 Leaked Tesla Memo, He Lays Out 7 Blunt Rules for Getting More Shit Done:


If you liked this, you might also like this post from our AI-focused page: x.com/realBigBrainAI…

Judea Pearl, a pioneer of causal AI, argues that no amount of scaling will get LLMs to AGI.

He believes current large language models face fundamental mathematical limitations that can’t be solved by making them bigger.

“There are certain limitations, mathematical limitation that are not crossable by scaling up.”

His core argument: LLMs don’t learn how the world works. They learn from *human interpretations* of how the world works.

“What LLM’s doing right now is they summarize world models authored by people like you and me available on the web and they do some sort of mysterious summary of it, rather than discovering those world models directly from the data.”

He illustrates this with healthcare data.

When hospitals collect data on treatment effects, that raw data never reaches the LLMs.

Instead, the models consume doctors’ written interpretations: analyses shaped by people who already have a mental model of how disease and treatment work.

In other words, LLMs are learning from the map, not the territory.

The missing piece, according to Pearl, is causal reasoning — the ability to understand not just *what* happens, but *why*.

And he’s clear this isn’t a gap that more parameters or training data will close.

It raises an uncomfortable question…

If AGI requires machines that build their own world models from raw data rather than summarising ours, are we even on the right road?

Tweet from realBigBrainAI

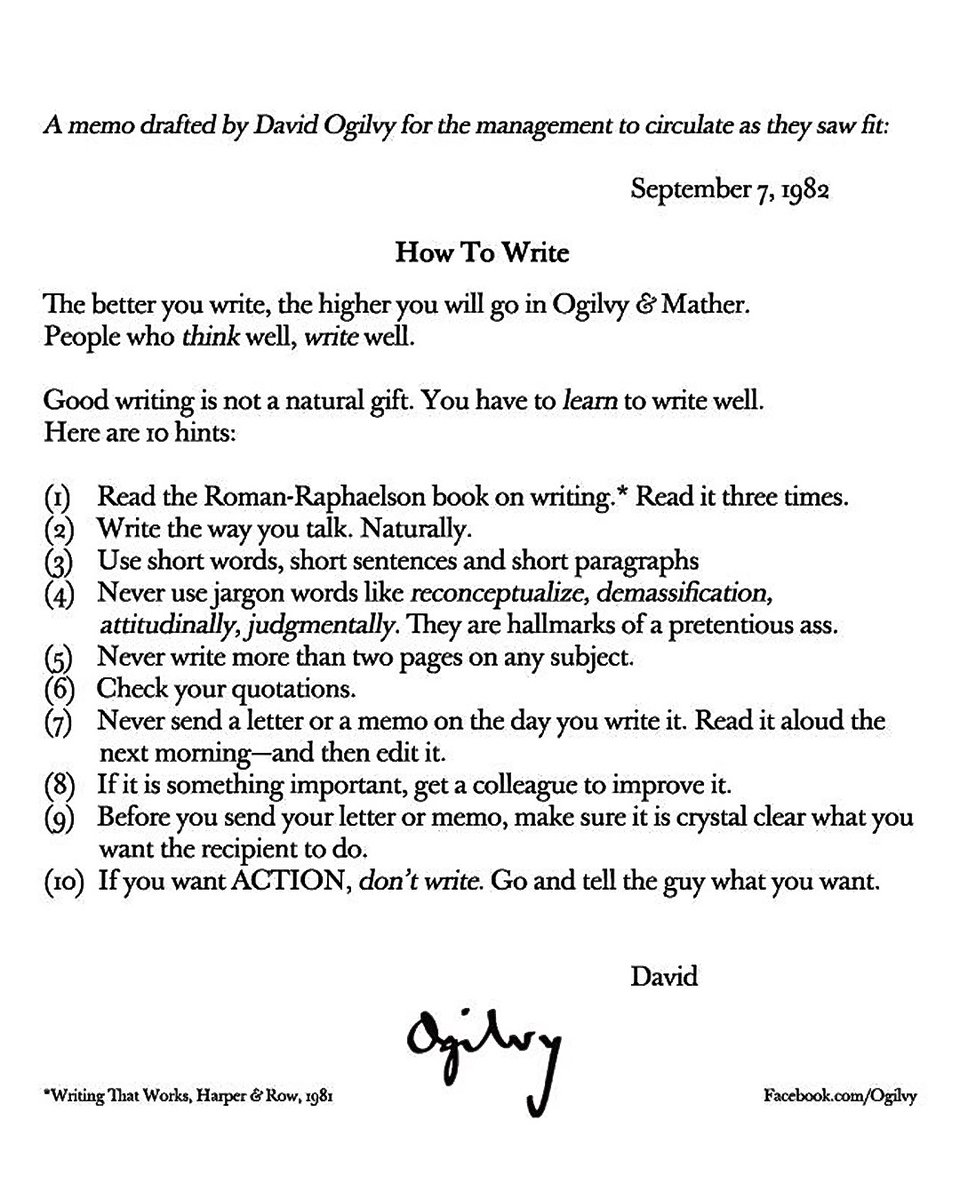

In 1982, the Father of Advertising wrote an internal memo titled “How to Write.”

David Ogilvy built a $1B+ agency and created campaigns for Dove, Rolls-Royce, and American Express.

His 10 rules still work for ads today.

Here’s a breakdown of each one:

Tweet from BigBrainMkting